If you’re a content designer, or someone responsible for the words in a product, you want to use language your users recognize and understand. One way to do that is to look at the language they use themselves, in places like social media, forums and reviews.

Analyzing user-generated content can help you quickly check assumptions and get supporting evidence for decisions, especially when time or budget for user testing is limited.

At university, I studied English language and linguistics, and I discovered that techniques from corpus linguistics (a way of analyzing collections of “real-life” language) are also useful for getting insights from user-generated content at scale. In this post, I’ll share techniques I used in a recent project that helped me make better terminology recommendations before we ever tested with users.

Sourcing user-generated content

Before you can start to analyze language, you’ll need to create a corpus of user-generated content. A corpus is a large collection of text, normally from a particular source or on a specific topic.

If you have a relatively small amount of user-generated content, you might want to create a general corpus of all your customer language. Or, if you have a large volume of customer content available, you could narrow it down to what’s relevant to your project. To do this, I find it’s helpful to do some initial research to work out what topics to look for. Avoid searching too narrowly, though, or you’ll end up only with text that exactly matches what you searched for.

For example, when I wanted to learn how users talk about lead scoring (a way of prioritizing leads for sales), I searched for:

- adjacent terms or synonyms our users were using, like ‘prospect score’

- branded terms, for example, ‘HubSpot score’

- competitor terms for the same things that our users might be using, like ‘lead grade’
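If you already have a pile of collected text and want to narrow it to a topic, the search terms above can be applied with a few lines of Python. This is only a sketch: the term list is illustrative, and `mentions_any` and `filter_corpus` are hypothetical helper names, not part of any tool mentioned in this post.

```python
import re

# Illustrative search terms based on the examples above; swap in your own.
SEARCH_TERMS = ["lead score", "lead scoring", "prospect score", "HubSpot score", "lead grade"]

def mentions_any(text, terms=SEARCH_TERMS):
    """Return True if the text contains any term as a whole phrase, ignoring case."""
    pattern = r"\b(?:" + "|".join(re.escape(term) for term in terms) + r")\b"
    return re.search(pattern, text, flags=re.IGNORECASE) is not None

def filter_corpus(texts, terms=SEARCH_TERMS):
    """Keep only the texts that mention at least one of the search terms."""
    return [text for text in texts if mentions_any(text, terms)]
```

Searching for several adjacent, branded and competitor terms at once is one way to keep the search from becoming too narrow.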

You can find user-generated content to analyze in a variety of public and internal sources.

Public sources

Social media

Depending on what kind of business you’re in, you might find your users on Reddit, Twitter or LinkedIn.

Twitter might not be as active as it once was, but it still has a powerful advanced search that you can use to find relevant tweets mentioning your product or area of interest.

Forums

Are there discussion forums where your users typically hang out? Here are some tips for how to find relevant forum discussions. We’re lucky at HubSpot that we have an active community feedback forum.

Reviews

Reviews on your own website or third-party sites can be a rich source of customer language.

Internal sources

A word of caution on internal confidential sources: when analyzing data with external tools, consider security and data privacy laws. For this reason, I’m only using public sources in my examples.

Customer service

Customer service transcripts can be a goldmine of customer language. You might be able to find useful content from chat bot exchanges, emails or call transcripts.

Sales calls

Thanks to advances in generative AI, it’s become easier to reference valuable transcripts from sales calls, where potential customers talk directly about your products and features.

User research

Another good source is transcripts from research calls or verbatim text from open-ended survey questions.

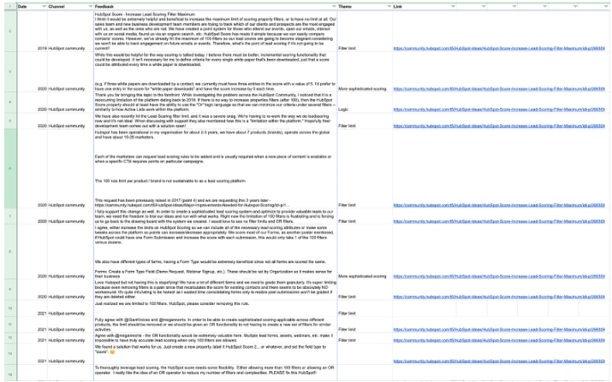

After sourcing text, you’ll want to bring it all together in a spreadsheet or plain text file for analysis. I like to keep text in a spreadsheet with the source, date and URL in separate columns, in case I want to reference it later. You’ll only need the text column for the analysis, but having the extra information can be helpful, for example, if you want to quote the verbatim text in a report.

Example spreadsheet of user-generated content. You only need to paste the column with the text (feedback) into an analysis tool.
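If you export the spreadsheet as a CSV file, pulling out just the text column can be scripted. The sketch below assumes the text lives in a column named `feedback`, as in the example spreadsheet; the file name in the usage note and the `load_corpus` helper are hypothetical.

```python
import csv

def load_corpus(path, text_column="feedback"):
    """Read one column of a CSV export and return its non-empty cells as a list of strings."""
    with open(path, newline="", encoding="utf-8") as f:
        reader = csv.DictReader(f)
        # Skip rows where the text cell is missing or blank.
        return [
            row[text_column].strip()
            for row in reader
            if (row.get(text_column) or "").strip()
        ]
```

For example, `load_corpus("user_feedback.csv")` would return a list of feedback strings that you can join with line breaks and paste into an analysis tool.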

Tools to analyze user-generated content at scale

Once you have your corpus, you can start to analyze it using linguistic or AI tools. Here are 3 to try:

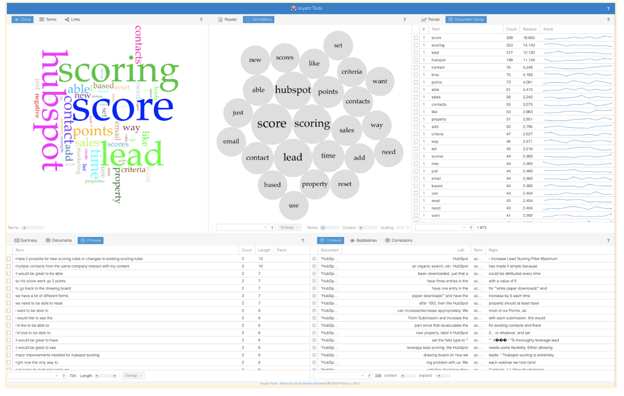

Voyant Tools

Voyant Tools is free, and you don’t need to sign up.

To analyze your text, paste in your corpus text or a list of URLs, and it'll give you the analysis straight away.

You can visualize the words and phrases in your corpus in various ways. You can also drill into words and links between them.

Example analysis of lead scoring text from Voyant Tools

An example of links between lead scoring terms from Voyant Tools

Not got any text to analyze yet? Have a play around with the Jane Austen corpus.

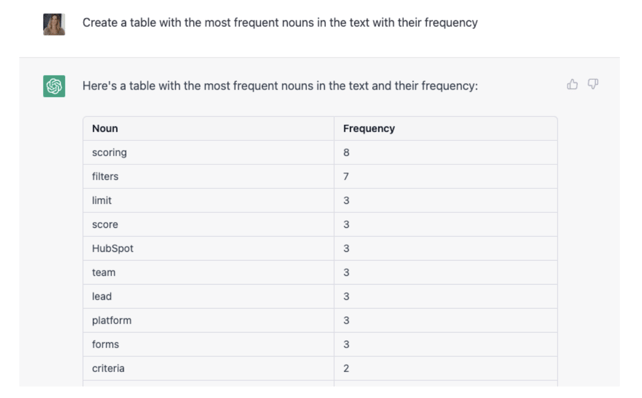

AI tools

Large language models are trained on a corpus, and they can also be valuable tools for analyzing user-generated content.

Generative artificial intelligence tools like ChatGPT have a fairly short character limit, but can be useful and fun to try for small amounts of text! The memory of AI tools is rapidly increasing, so the restrictive character limit probably won’t be around for long. After pasting your text into the chat you can ask for different outputs such as frequency and collocates (words that frequently appear together).

Table of frequency of nouns in the text generated by ChatGPT

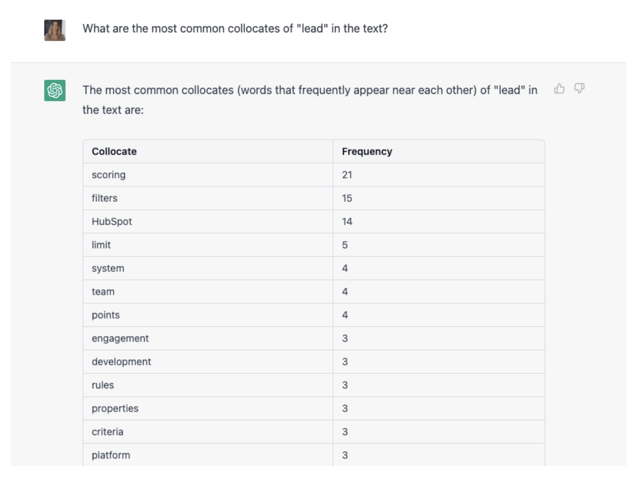

Table of collocates of "lead" in the text generated by ChatGPT

When using tools like ChatGPT, it's worth repeating the reminder to only upload non-confidential content that’s already publicly available.

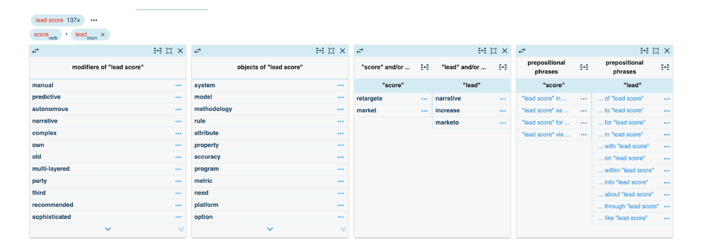

Sketch Engine

Sketch Engine is a paid-for tool that offers powerful language insights. As with Voyant Tools, you can upload either a text file or a list of URLs, and then run detailed analysis on the corpus. The ability to drill into how specific words and phrases are used is particularly useful.

Example of a view from Sketch Engine of modifiers, objects, and prepositional phrases of "lead score"

Insight to inform design

Here are some of the ways you might want to use this analysis to inform your design decisions.

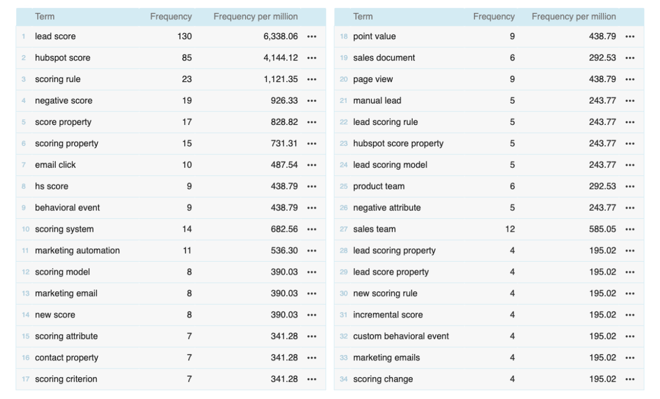

Frequency

The frequency of words and phrases can help you decide between terms, and gives you evidence for your decisions.

Frequency of words and phrases in the text from Sketch Engine
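To get a feel for what a frequency count involves, here is a minimal sketch using only Python's standard library. The stopword list is deliberately tiny and illustrative, and `tokenize` and `term_frequencies` are hypothetical helpers; dedicated tools handle tokenizing and filtering far more carefully.

```python
import re
from collections import Counter

# A tiny, illustrative stopword list; real tools ship much longer ones.
STOPWORDS = {"a", "an", "and", "the", "to", "of", "is", "in", "for", "on",
             "it", "my", "our", "i", "we", "this", "that"}

def tokenize(text):
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z'’]+", text.lower())

def term_frequencies(texts, n=1):
    """Count n-word phrases across all texts, skipping phrases that start or end with a stopword."""
    counts = Counter()
    for text in texts:
        tokens = tokenize(text)
        for i in range(len(tokens) - n + 1):
            gram = tokens[i:i + n]
            if gram[0] in STOPWORDS or gram[-1] in STOPWORDS:
                continue
            counts[" ".join(gram)] += 1
    return counts
```

Calling `term_frequencies(texts, n=2).most_common(10)` would list the ten most frequent two-word phrases, which is a quick way to compare candidate terms such as "lead score" and "prospect score".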

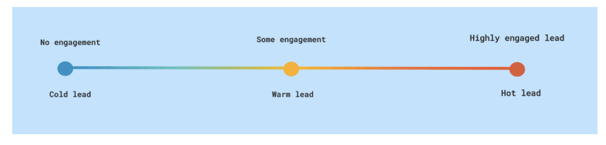

Collocates

Collocates are words that occur together more often than would be expected by chance. For example, common collocates of “coffee” would be words like “cup”, “strong”, and “hot”. Collocates can help you build a better understanding of your users’ mental models.

Both Voyant and Sketch Engine automatically show collocates. You can find them under correlations in Voyant and word sketch in Sketch Engine.
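If you want to see roughly what happens under the hood, the sketch below counts the words that appear within a few tokens of a target word. It is a simplification: it reports raw neighbor counts and does not test whether a pairing is more frequent than chance, which Voyant Tools and Sketch Engine do with their own statistics. The `collocates` helper, the window size and the stopword list are all assumptions for illustration.

```python
import re
from collections import Counter

# A tiny, illustrative stopword list; real tools ship much longer ones.
STOPWORDS = {"a", "an", "and", "the", "to", "of", "is", "in", "for", "on",
             "it", "my", "our", "i", "we", "this", "that"}

def tokenize(text):
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z'’]+", text.lower())

def collocates(texts, target, window=3):
    """Count words that appear within `window` tokens of `target`, ignoring stopwords."""
    counts = Counter()
    for text in texts:
        tokens = tokenize(text)
        for i, token in enumerate(tokens):
            if token != target:
                continue
            start = max(0, i - window)
            neighbors = tokens[start:i] + tokens[i + 1:i + 1 + window]
            counts.update(w for w in neighbors if w not in STOPWORDS and w != target)
    return counts
```

`collocates(texts, "lead").most_common(10)` would then give the ten most frequent neighbors of "lead" in your corpus.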

For lead scoring, some common collocates of ‘lead’ were warm, cold, and hot. Users were using a temperature scale to explain the level of engagement of a lead.

Visualization of how customers think about temperature in relation to leads

Once you’ve found common collocates, you can dig into the verbatim text to add color and detail to the mental model. For instance, users talked about leads being ‘warm enough’ and ‘cooling down’.
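One way to dig into the verbatim text is a concordance, sometimes called keyword in context, which lists every occurrence of a word with a little of the text on either side. A minimal sketch, with a hypothetical `concordance` helper and an arbitrary default context width:

```python
import re

def concordance(texts, target, width=30):
    """Return (left context, match, right context) for each occurrence of `target`."""
    pattern = re.compile(r"\b" + re.escape(target) + r"\b", flags=re.IGNORECASE)
    lines = []
    for text in texts:
        for match in pattern.finditer(text):
            left = text[max(0, match.start() - width):match.start()]
            right = text[match.end():match.end() + width]
            lines.append((left, match.group(0), right))
    return lines
```

Running this for "warm" or "cooling" over the corpus makes it easy to scan how users phrase the idea and to pick quotes for a report.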

Nouns and verbs

Similarly, finding the nouns your users are using tells you about the objects in their mental models, while the verbs give you insight into the tasks your users are trying to complete. For example, users were likely to talk about building and updating a lead score.

Example from Sketch Engine of verbs with "lead score" as object

Knowing the frameworks your users are using means you can make sure that the structure of the content you’re designing matches what they expect.

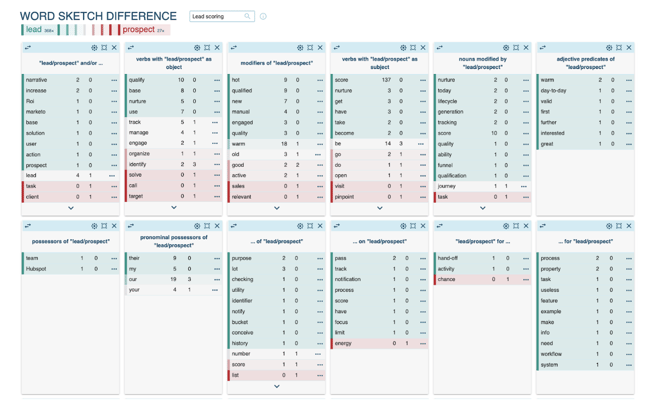

Compare terms

When deciding between two terms, it can be helpful to analyze how users are using both terms. With Sketch Engine, you can build comparisons of terms that give you deep insight into user language.

Word sketch from Sketch Engine, showing the difference between the terms lead and prospect

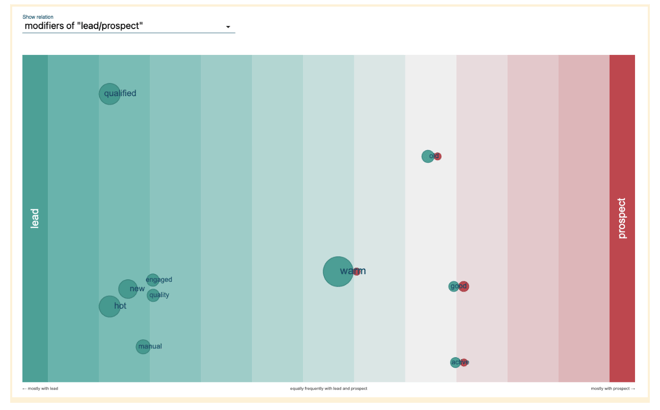

Example visualization from Sketch Engine of the frequency of use of modifiers of lead and prospect
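For a rough, do-it-yourself version of this comparison, you could count the word that comes immediately before each term. This is only a stand-in for modifiers: Sketch Engine's word sketches use grammatical analysis, while this sketch looks at word position alone and ignores plurals. The helper names and the stopword list are assumptions.

```python
import re
from collections import Counter

# A tiny, illustrative stopword list; real tools ship much longer ones.
STOPWORDS = {"a", "an", "and", "the", "to", "of", "is", "in", "for", "on",
             "it", "my", "our", "i", "we", "this", "that"}

def tokenize(text):
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z'’]+", text.lower())

def preceding_words(texts, term):
    """Count the non-stopword that appears directly before each occurrence of `term`."""
    counts = Counter()
    for text in texts:
        tokens = tokenize(text)
        for i in range(1, len(tokens)):
            if tokens[i] == term and tokens[i - 1] not in STOPWORDS:
                counts[tokens[i - 1]] += 1
    return counts

def compare_terms(texts, term_a, term_b):
    """Map each preceding word to a pair of counts: (before term_a, before term_b)."""
    a = preceding_words(texts, term_a)
    b = preceding_words(texts, term_b)
    return {word: (a[word], b[word]) for word in sorted(set(a) | set(b))}
```

`compare_terms(texts, "lead", "prospect")` would show which descriptive words users attach to one term, the other, or both.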

In summary, analyzing your users’ content can help you make more strategic, user-centered design decisions. Even if you don’t have time or resources to test with users, there are tools you can use to get helpful insights into the language they use and understand. It also gives you powerful evidence to back up your decisions, helping you to work faster and keep your team connected to the end user.
