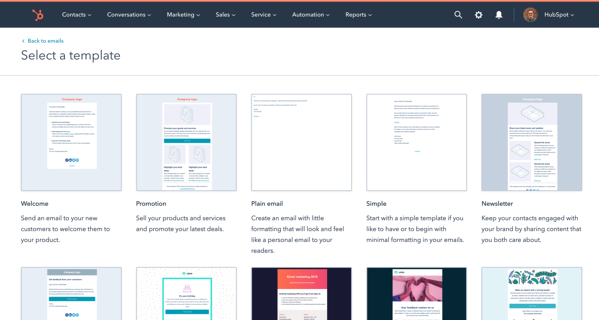

Back in July, HubSpot released free email marketing tools on top of our already free CRM. As part of these email tools, every portal gets 20 beautiful templates that our Content Design Assets and Email teams designed. These templates can be customized with your content in the drag-and-drop editor before sending them out.

Email templates are just one of many default assets that HubSpot provides to make content creation easy for our customers. There are a handful of different data models that we use to represent the building blocks of the content platform (Templates, Custom Modules, Themes, etc.), and these models are shared across HubSpot. Knowledge Base, Email, and the Content Management System, just to name a few, all use data types stored in the content platform.

Because we have these shared building blocks, we also get to benefit from a shared delivery system. There’s a bunch of processing and nuances to the system, but at its heart, it’s just copying the data model from one row in a database table to another. So regardless of whether you’re buying a new pack of landing page templates from the Marketplace or copying a Custom Module from one of your portals to another, it’s all the same back end: the same delivery mechanism.

Our asset delivery system works really well when, for example, a developer at a small business builds a template in a developer staging portal and wants to copy it to the production portal. However, when HubSpot builds a new template and wants to deliver the exact same template to 900,000+ portals, it can lead to all sorts of scaling issues. Copying the exact same data to 900,000+ rows of a table all at once can cause lag on the database replicas, cache invalidation issues with our CDN, and more. Not to mention that it’s a waste of processing time and energy. It’s the same template everywhere, so why do we need 900,000 copies of it? Lastly, it’s slow. Delivery of all 20 templates to all 900,000+ portals could take hours, sometimes days. HubSpot development moves fast: we have dozens of microservices deploying at any given time. We want our Content Design teams to be able to build a new template and see it in production as soon as it’s ready, just like the rest of our deployables.

Earlier this year, with the launch of our free email tool approaching, we knew we’d be delivering to way more portals. This system had to change, and fast, so the CMS Assets I/O team set out on a mission to re-architect and build a scalable asset delivery system.

At HubSpot, we love our open source tools. We’ve open sourced a number of our systems (including Singularity, our scheduler for running Mesos tasks, and Jinjava, our Jinja templating engine for Java). So when we started looking at redesigning this system, we loved the idea of Hollow, a Netflix-built open source tool for propagating read-only, in-memory datasets.

Hollow follows a basic producer-consumer model. We serialize the content object down to raw JSON and the producer puts it into a topic. Consumers listen to the topic, and when the topic updates, they pull in the newest version of the JSON, deserialize it, and update their copy of the object with the new version. Hollow is built to be highly scalable by automatically de-duplicating data and optimizing for heap space. It can handle data sets on the order of hundreds of GB, which would never have been practical to hold in memory before. Hollow handles data on this scale by calculating the differences in the data whenever the producer receives an update, and only pushing a payload containing the delta to the consumers. All this to say that Hollow was the perfect tool for this job.
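To make the delta idea concrete, here is a minimal Python sketch of a producer that publishes only what changed and a consumer that applies those changes to its in-memory copy. This is not Hollow's actual API (Hollow is a Java library) and not HubSpot's implementation; `Producer`, `Consumer`, `compute_delta`, and the template fields are all hypothetical names chosen for illustration.

```python
import copy
import json
from typing import Dict, List, Tuple

Snapshot = Dict[str, dict]  # hypothetical shape: template id -> template fields


def compute_delta(old: Snapshot, new: Snapshot) -> dict:
    """Return only the entries that were added, changed, or removed."""
    upserts = {key: value for key, value in new.items() if old.get(key) != value}
    removals = sorted(key for key in old if key not in new)
    return {"upserts": upserts, "removals": removals}


class Producer:
    """Holds the source of truth and publishes one serialized delta per update."""

    def __init__(self) -> None:
        self.state: Snapshot = {}
        self.version = 0
        self.published: List[Tuple[int, str]] = []  # (version, JSON delta)

    def publish(self, new_state: Snapshot) -> None:
        delta = compute_delta(self.state, new_state)
        self.version += 1
        self.published.append((self.version, json.dumps(delta)))
        self.state = copy.deepcopy(new_state)


class Consumer:
    """Keeps a read-only in-memory copy by applying deltas it has not seen."""

    def __init__(self) -> None:
        self.state: Snapshot = {}
        self.version = 0

    def refresh(self, producer: Producer) -> None:
        for version, payload in producer.published:
            if version <= self.version:
                continue  # already applied
            delta = json.loads(payload)
            self.state.update(delta["upserts"])
            for key in delta["removals"]:
                self.state.pop(key, None)
            self.version = version
```

In this sketch, changing the subject line of one template out of twenty produces a payload containing only that one template, which is the property that keeps updates cheap as the dataset grows.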

Of course, a complete system migration had to be handled carefully; millions of emails depend on these default assets. All of this re-architecting had to be done without our customers, or our customers’ customers, even noticing. We started by adding a data layer that could decide whether an API request should pull from Hollow or from MySQL. However, now we were maintaining identical data in two separate places. So we built in alerts that fire whenever the database and the in-memory data differ. We built in switches to turn off Hollow in case of an emergency, and created fallbacks to pull from the database if a template were missing from Hollow entirely.
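The safety mechanisms described above (a routing layer, a kill switch, a database fallback, and mismatch alerts) can be sketched roughly as follows. This is an illustration under assumptions, not the actual data layer: `TemplateReader`, `load_from_db`, and the in-memory dictionary standing in for Hollow are hypothetical, and alerts are simply collected in a list rather than sent anywhere.

```python
from typing import Callable, Dict, List, Optional


class TemplateReader:
    """Routes reads to the in-memory store, falling back to the database."""

    def __init__(
        self,
        in_memory: Dict[str, dict],
        load_from_db: Callable[[str], Optional[dict]],
        use_in_memory: bool = True,
    ) -> None:
        self.in_memory = in_memory          # stand-in for the Hollow dataset
        self.load_from_db = load_from_db    # stand-in for a MySQL lookup
        self.use_in_memory = use_in_memory  # emergency kill switch
        self.alerts: List[str] = []

    def get(self, template_id: str) -> Optional[dict]:
        if not self.use_in_memory:
            return self.load_from_db(template_id)
        template = self.in_memory.get(template_id)
        if template is None:
            # Missing from memory entirely: record it and serve from the database.
            self.alerts.append(f"missing in memory: {template_id}")
            return self.load_from_db(template_id)
        return template

    def verify(self, template_id: str) -> bool:
        """Compare both sources and record an alert if they disagree."""
        if self.load_from_db(template_id) != self.in_memory.get(template_id):
            self.alerts.append(f"mismatch: {template_id}")
            return False
        return True
```

Keeping the switch and the fallback in one place means callers never need to know which store answered, which is what lets a migration like this stay invisible to customers.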

Once the pieces were in place, we started slowly allowing customer requests to actually pull from Hollow. We used our feature gating system to provide it to 5% of customer portals, and over the course of a week we rolled it out to everyone. Once it was ungated to all, we saw something incredible: template delivery with ZERO database writes. What used to take hours was now instantaneous.
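One common way to implement a percentage rollout like this is to hash each portal ID into a stable bucket, so that a portal ungated at 5% stays ungated as the percentage grows. The sketch below shows that general technique; it is an assumption about how such a gate could work, not a description of HubSpot's feature gating system, and `is_ungated` and the feature name are hypothetical.

```python
import hashlib


def is_ungated(portal_id: int, feature: str, percent: int) -> bool:
    """Deterministically place a portal in one of 100 buckets for a feature."""
    digest = hashlib.sha256(f"{feature}:{portal_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100  # value in 0..99
    return bucket < percent


# Hypothetical usage: widen the gate from 5% to 100% over the rollout.
enabled_at_5 = [p for p in range(1, 1001) if is_ungated(p, "hollow-templates", 5)]
enabled_at_100 = [p for p in range(1, 1001) if is_ungated(p, "hollow-templates", 100)]
```

Because the bucket depends only on the feature name and portal ID, raising the percentage only ever adds portals, so no customer flips back and forth between the old and new read paths during the rollout.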

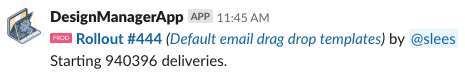

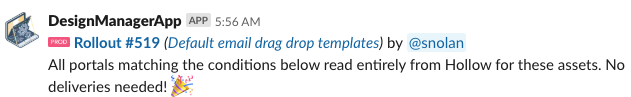

Our team has automated Slack notifications whenever a default asset gets changed. We saw our notifications on production systems go from this:

To this:

We could now safely add and change templates, and actually see the work live in production when we pushed it. We’ve extended Hollow to include many more data types from our content platform, and we’re excited to see what else this tool can do.

If this sort of work interests you, or you have more questions, please reach out! We’re always looking for talented engineers to help us grow our CMS.